According to a recent CRO Oversight Benchmark Survey, 90% of ClinOps leaders said they plan to increase their use of CROs in the coming year, yet only 22% were confident that their CROs would achieve milestones on time.

With sponsors facing the changes needed to comply with ICH E6 (R2), oversight is top of mind, and sponsors must proactively answer questions such as:

- “Is my vendor doing what I hired them to do?”

- “Is the CRO adhering to my quality plan?”

- “Is the CRO’s performance meeting our expectations?”

- “What issues should we be escalating?”

Join Linda Sullivan of Metrics Champion Consortium (MCC) and Julie Peacock of Comprehend Systems for an in-depth look at CRO oversight and risk management best practices. Their presentation examines the gaps in oversight processes, the causes of these gaps, and how to address them successfully.

Drawing on MCC’s pivotal work, attendees will learn how to establish effective metrics for managing oversight, determine which metrics matter most, and report results at the appropriate oversight level.

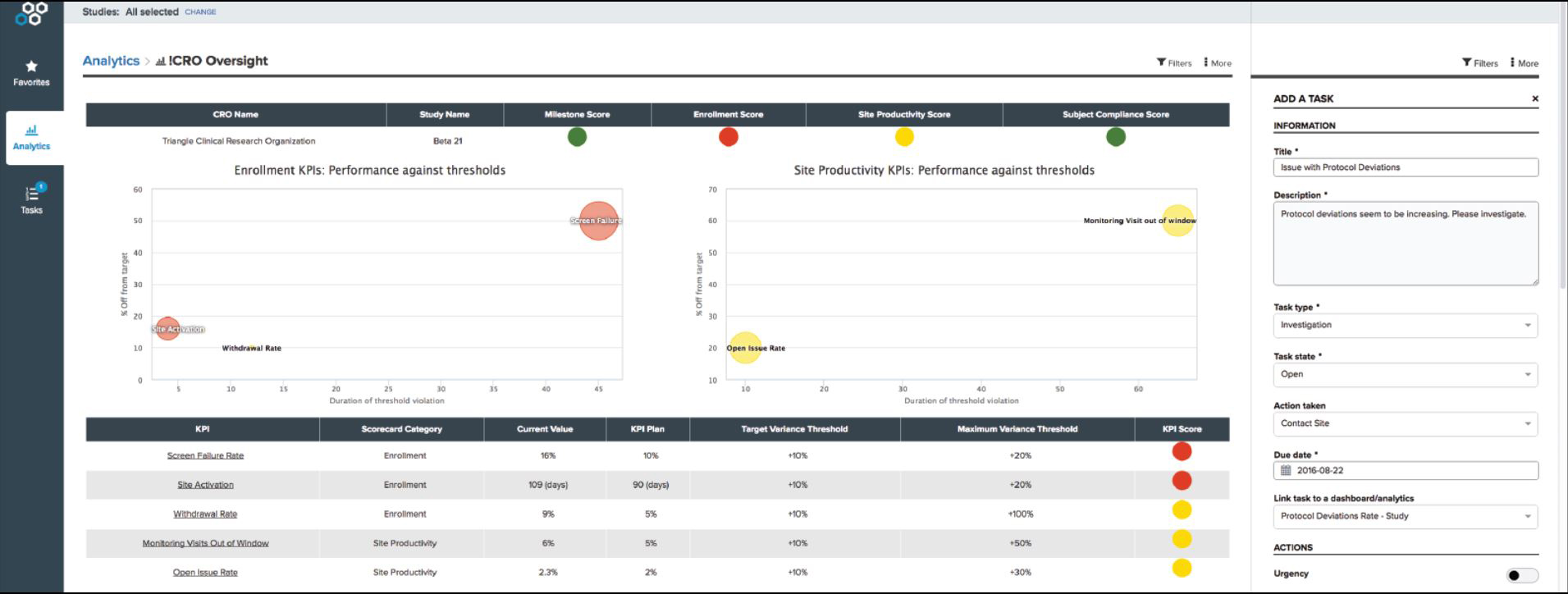

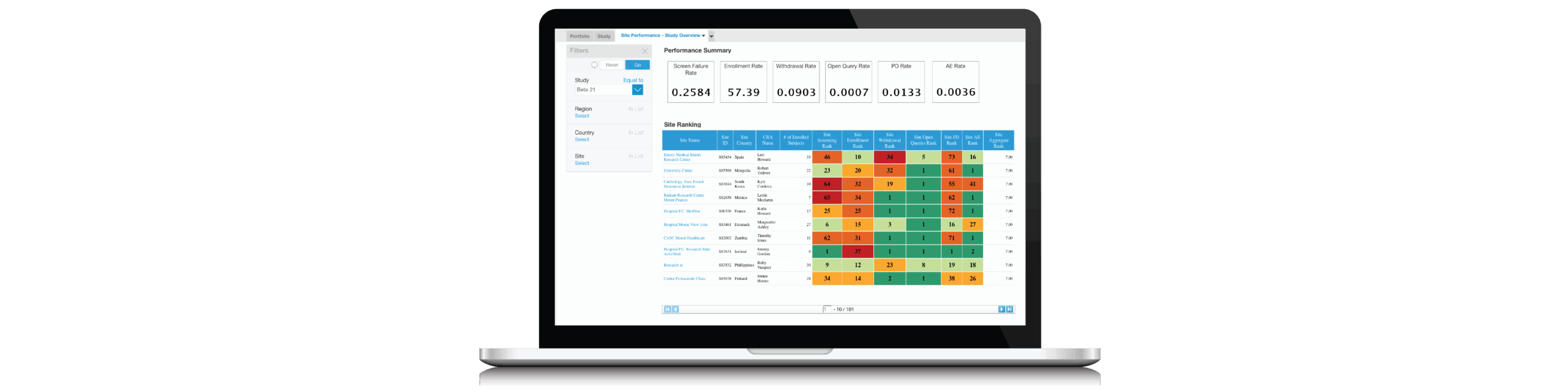

Additionally, attendees will see a live demonstration of how leading sponsors collaborate with their CROs on early detection and threshold control using automated dashboards and best-practice KPIs.

With accurate, real-time dashboards and metrics, sponsors can reach quality results faster.

Speakers

Julie Peacock, Client Services, Comprehend

Closely aligned with customers and the sales organization, Julie focuses on enabling prospects and customers to adopt Comprehend’s Clinical Intelligence solutions. She manages go-to-market strategy, sales enablement, and product marketing.

Prior to Comprehend, Julie spent 18 years at Oracle Corporation in the enterprise application space where she managed strategy, field enablement and launch activities for a series of BtoB solutions. Julie holds a bachelor’s degree in Marketing from Auburn University.

Linda Sullivan, Co-Founder & President, Metrics Champion Consortium (MCC)

Ms. Sullivan is Co-Founder & President of the Metrics Champion Consortium (MCC), an industry association dedicated to leading the drug-development enterprise in the adoption and utilization of standardized metrics and benchmarks to drive performance improvement. She has been a featured speaker at Performance Metrics, Risk-Based Monitoring, Quality Management & Clinical Trial Oversight industry meetings.

Ms. Sullivan received a B.S. in Biology from Trinity College and an M.B.A. from Dartmouth College, where she was named a Tuck Scholar.

Who Should Attend?

Clinical Operations and Data Management Professionals

- Clinical Trial/Clinical Study Management

- Clinical Data/Informatics/IT

- Clinical Outsourcing

Clinical Research, Technology and Business Professionals

- Biometrics/Biostatistics

- Business Technology/Applications/Solutions

- Business Analyst

- CTO

- Project Management

Xtalks Partners

Comprehend

Comprehend offers a suite of Clinical Intelligence applications that enable ClinOps Execs, Data Managers and Medical Monitors to significantly improve the speed, safety and quality of a portfolio of clinical trials. Across studies, sites, systems and CROs, Comprehend’s Clinical Intelligence Suite is particularly effective for centralized monitoring, risk monitoring, CRO oversight and collaboration, and medical monitoring initiatives. Comprehend gives life sciences companies a new source of competitiveness and the confidence to deliver high-quality trial submissions at a new speed. Comprehend: the speed to quality results. Learn more at www.comprehend.com.

MCC

Founded in 2006, MCC is the leading industry association dedicated to the development of standardized performance metrics to improve clinical trials. MCC provides a collaborative environment in which biopharmaceutical and device sponsors, service providers and sites can improve clinical-trial development through the use of MCC standardized performance metrics. Based on requests from its members, MCC has built a Benchmarking Database and reporting tool for use with MCC’s metrics portfolio. Members who choose to participate in the MCC Benchmarking Database can load their own MCC metrics data, track their performance over time, and compare their data with aggregated, anonymized data from their peers.
